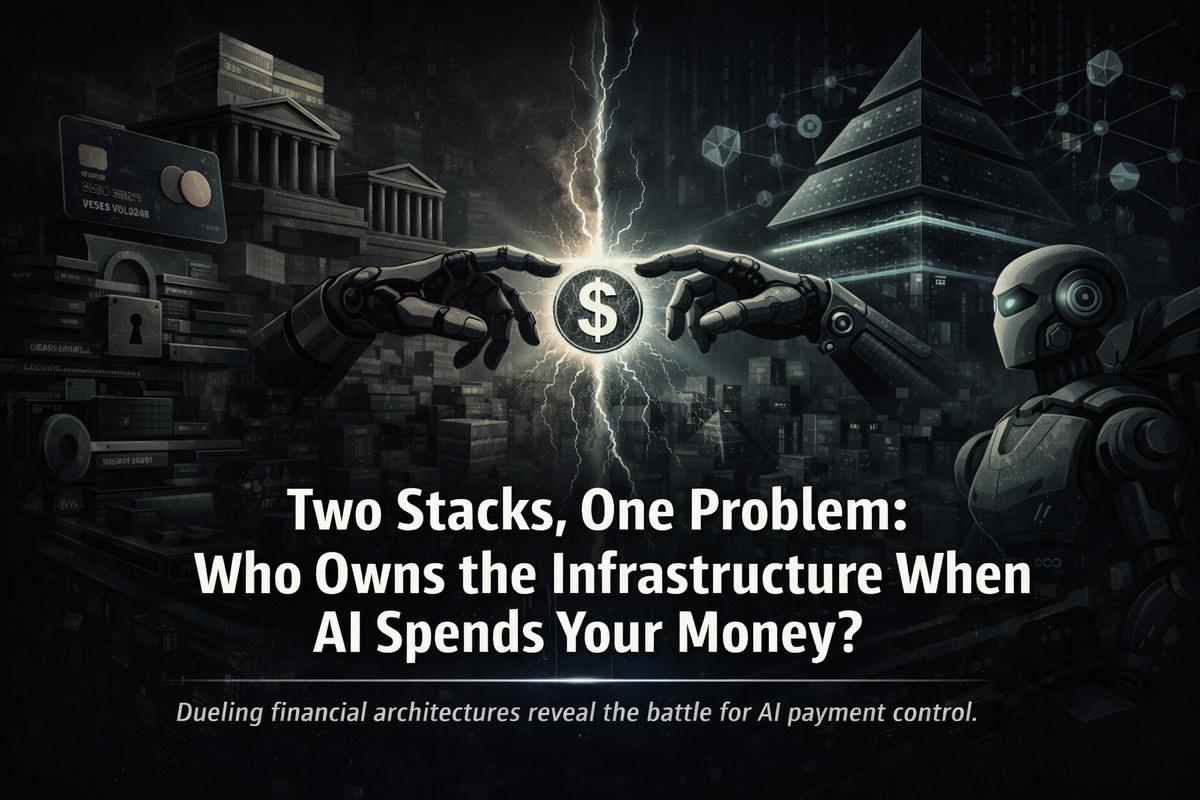

Two Stacks, One Problem: Who Owns the Infrastructure When AI Spends Your Money?

AI agents are about to start making payments on your behalf. The payments industry is split on how to make that safe. Two fundamentally different architectures are emerging, and the gap between them reveals what's really missing.

For most of human history, buying something required a person, a decision, and a payment. The transaction was atomic. Then came the platform era, and merchants started paying roughly 2.9% to a card network for the privilege of verifying that person's identity, and we all accepted this as the cost of trust at scale.

Now we are entering a third era, the agentic economy, and the toll is once again rising. OpenAI has reportedly signaled a plan to charge merchants 4% for transactions processed through ChatGPT's agent checkout. Think about what that means for a moment. If your AI agent replaces you in the commerce loop, discovering products, comparing merchants, and executing purchases, then whoever controls the payment rail for that agent takes a cut of every transaction. That is why Visa, Mastercard, Google, Stripe, and Coinbase have all shipped agentic payment infrastructure within the past twelve months, in a sprint to gain control of this layer.

But beneath the commercial positioning lies an unsolved structural problem that is far more consequential than who captures the margin. When an AI agent overspends, gets manipulated by a fraudulent merchant, or executes a purchase you never quite intended, who is actually accountable?

I wrote recently about how admiralty courts in the 1840s turned ships into legal persons to solve exactly this kind of problem, holding an autonomous agent accountable when its principal had no way to oversee or communicate with it. Historically, it was a problem of distance, but today it is a problem of velocity, as agents operate at a scale and speed we simply cannot follow. This becomes a real problem when you realise that the existing infrastructure only allows us to hold agents accountable retroactively.

Arjun Wadwalkar, writing on the governance gap in agentic payments in The AI Innovator, framed the stakes precisely:

We have created software that behaves like an economic actor, but given it "no legal container, balance sheet, or sovereign accountability."

Financial infrastructure has KYC for individuals and KYB for businesses, but it has nothing equivalent for AI agents. No persistent identifiers. No portable reputation. No clear chain of accountability. Fime, the payments consultancy, calls this a "responsibility vacuum" and asks the obvious question: is liability with the developer, the wallet issuer, or the API provider? As they put it, "we are entering a legal fog where the old rules do not map."

The payments industry has started calling the missing piece Know Your Agent, or KYA, and the convergence is striking: Mastercard now requires that only registered agents can transact through its Agent Pay infrastructure, Trulioo and PayOS have published a formal KYA framework built around a "Digital Agent Passport," and Tomer Jordi Chaffer proposes identity verification and accountability mechanisms for decentralised AI agents.

While there is broad agreement that this problem is real, the consensus fractures over where in the stack accountability should be anchored. TradFi believes that identity and accountability belong at the top, in standards and institutional oversight, all layered onto existing rails. Crypto believes they belong further down, embedded in the wallet infrastructure, or in some cases directly in the settlement layer itself.

As such, two fundamentally different architectures are emerging, and they start from opposite ends of the stack.

The TradFi approach: watch, log, adjudicate

The TradFi world sees the answer in new standards layered onto existing payment rails. A cluster of new protocols from Google (AP2), OpenAI and Stripe (the Agentic Commerce Protocol), Mastercard (Agent Pay), and Visa (Trusted Agent Protocol) all share the same DNA: they define how agents discover, select, purchase, and settle transactions within the existing card and account-to-account infrastructure. Where it gets interesting is in how they propose to handle accountability. Mastercard's Verifiable Intent framework, for example, creates a tamper-proof record of user instructions that can serve as a legal basis when disputes arise.

I find it useful to think of this as a Common Law approach to agent accountability: reactive, precedent-based, built on observation and after-the-fact adjudication. The agent acts, the system records what happened, and if something goes wrong, humans review the evidence. Stripe's description of its own model captures the underlying philosophy well: the AI agent carries the buyer's identity and payment context into the transaction, but the human remains the accountable principal throughout.
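The record-then-review pattern can be pictured as a hash-chained log, where each entry commits to the one before it. The sketch below is purely illustrative: it is not Mastercard's Verifiable Intent design, and the class name `IntentLog` and the payload fields are assumptions made up for this example. Note that a chain like this makes tampering detectable after the fact; it does not stop the agent from acting.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash, payload):
    """Hash an entry's payload together with the previous entry's hash."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class IntentLog:
    """Hypothetical append-only log of user instructions and agent actions."""

    def __init__(self):
        self.entries = []

    def append(self, payload):
        # Each new entry commits to the hash of the entry before it.
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"prev": prev, "payload": payload, "hash": _digest(prev, payload)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self):
        # Recompute every hash; any edited entry breaks the chain.
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev:
                return False
            if entry["hash"] != _digest(prev, entry["payload"]):
                return False
            prev = entry["hash"]
        return True


log = IntentLog()
log.append({"user": "alice", "instruction": "buy running shoes under 120 USD"})
log.append({"agent": "shopper-1", "action": "purchase", "amount_usd": 95})
```

A reviewer handling a dispute would call `log.verify()` before trusting the entries. The purchase itself has already happened by then, which is exactly the reactive quality described above.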

The commercial logic is clear: billions of card credentials already exist; dispute resolution mechanisms are well understood; and regulatory frameworks like PSD2 are already in place. Extending all of this for agents is clearly the path of least resistance.

However, the weaknesses are equally obvious once you look at it structurally. These systems were designed for a world where a human clicks the "buy" button. Fraud models assume human behavioural patterns, while authentication mechanisms like 3D Secure confirm who the human is but say nothing about which agent acted on their behalf. As Wadwalkar argues, "we are attempting to govern a new category of actor using tools built for the old one."

These structural weaknesses are particularly dangerous at scale, given how systemic risks tend to arise. In May 2010, for example, a human "fat finger" mistake is widely speculated to have sent algorithmic trading systems haywire, causing what was later dubbed the "flash crash," in which the US stock market temporarily lost roughly a trillion dollars in value within minutes.

Now imagine the same dynamic applied to agentic commerce. If millions of AI agents operate on the same checkout protocols and a logic error or adversarial manipulation cascades, a system built on after-the-fact review provides a detailed recording of the crash, and little else.

A different architecture: encode the constraints

There is a fundamentally different way to approach this, and it needs more attention from TradFi than it is currently getting. Instead of passively watching the agent and retroactively adjudicating when things go wrong, you build the constraints into the infrastructure itself so that the unintended action becomes mechanically implausible.

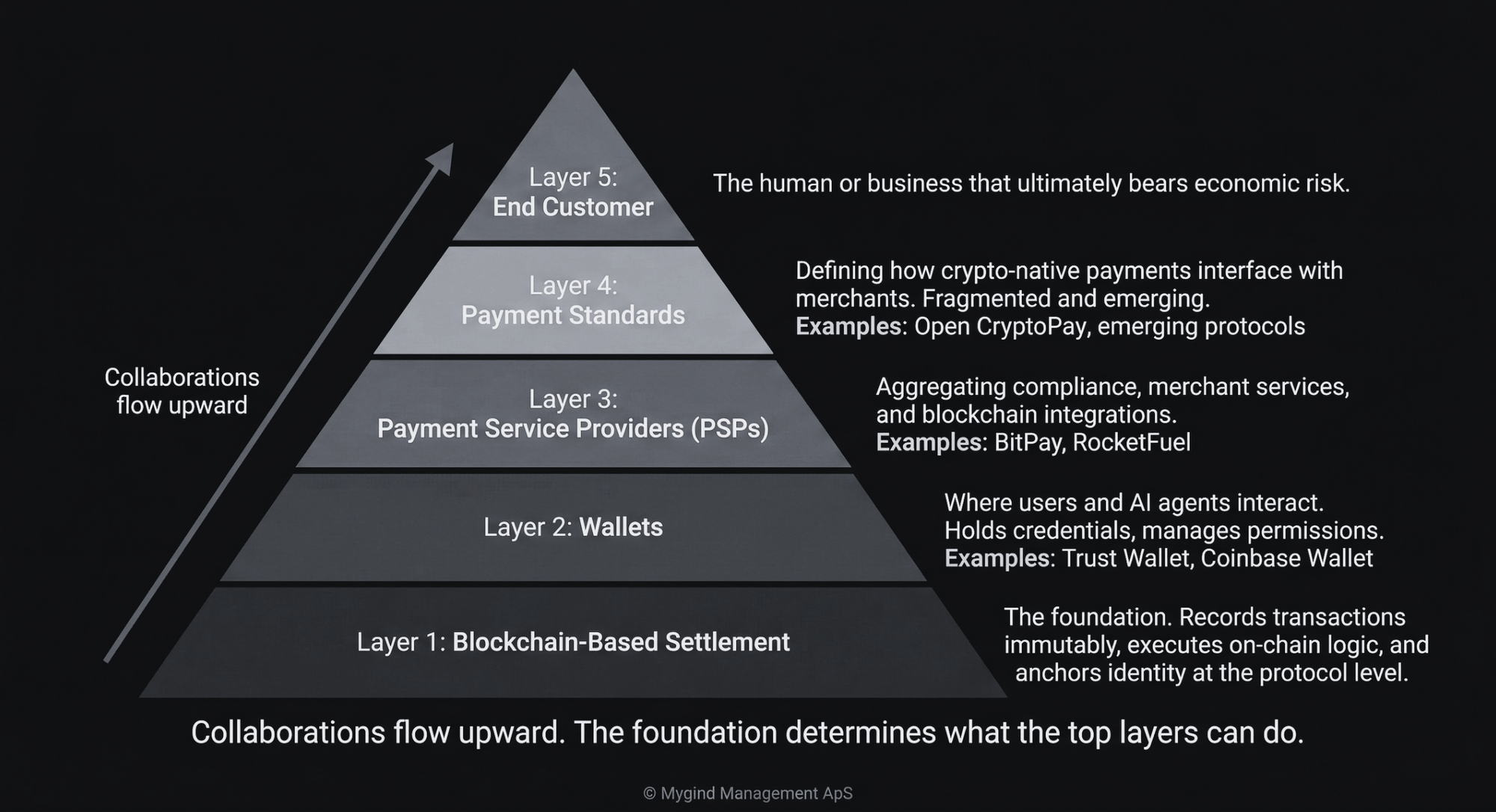

This is the crypto-native approach. To show how it actually works, I have developed a five-layer framework that covers the full stack, from settlement at the base to the end customer at the top. The foundation is the blockchain-based settlement layer (Layer 1), and from there the stack builds upward through wallets (Layer 2), payment service providers (Layer 3), and payment standards (Layer 4) to reach the end customer (Layer 5).

Think of blockchain-based settlement as Napoleonic Code for agentic payments: prescriptive, encoded, where everything is forbidden unless the code explicitly allows it. Delegation rules are programmable and live on-chain, including spending limits, approved counterparties, time-bound permissions, and automatic revocation. Settlement is on-chain, always on, with no batch windows.
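To make the "forbidden unless explicitly allowed" idea concrete, here is a minimal off-chain sketch of the delegation rules listed above: spending limits, approved counterparties, a time-bound window, and automatic revocation on expiry. It is a plain Python simulation, not a smart contract, and every name in it (`DelegationPolicy`, `authorize`, the field names) is a hypothetical chosen for illustration rather than any wallet's real API.

```python
from dataclasses import dataclass


@dataclass
class DelegationPolicy:
    """Hypothetical default-deny policy attached to an agent's wallet."""

    per_tx_limit: int                  # max amount per payment, in minor units
    total_limit: int                   # max cumulative spend, in minor units
    allowed_counterparties: frozenset  # approved payees
    valid_from: int                    # unix seconds, inclusive
    valid_until: int                   # unix seconds, exclusive
    revoked: bool = False
    spent: int = 0

    def authorize(self, counterparty, amount, now):
        """Return (ok, reason). Spend is recorded only when ok is True."""
        if self.revoked:
            return False, "revoked"
        if now >= self.valid_until:
            # Automatic revocation: an expired policy can never be reused.
            self.revoked = True
            return False, "expired"
        if now < self.valid_from:
            return False, "not yet valid"
        if counterparty not in self.allowed_counterparties:
            return False, "counterparty not approved"
        if amount <= 0 or amount > self.per_tx_limit:
            return False, "per-transaction limit exceeded"
        if self.spent + amount > self.total_limit:
            return False, "total budget exceeded"
        self.spent += amount
        return True, "ok"


policy = DelegationPolicy(
    per_tx_limit=5000,
    total_limit=8000,
    allowed_counterparties=frozenset({"merchant-a"}),
    valid_from=100,
    valid_until=200,
)
```

The design choice worth noticing is that every branch rejects unless all checks pass, so a payment the user never anticipated fails before money moves instead of being reviewed afterwards.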

This is rapidly becoming reality, as Coinbase's Agentic Wallets, which launched barely a month ago, give AI agents non-custodial wallets with programmable transaction limits, effectively shifting the model from approving individual transactions to setting policies upfront. At the same time, their x402 protocol goes even further by enabling machine-to-machine micropayments over HTTP, allowing agents to pay for services programmatically and in real-time without touching traditional payment rails.
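The pay-per-request idea behind x402 builds on the HTTP 402 "Payment Required" status code: the server answers an unpaid request with 402 plus its payment terms, and the client retries with proof of payment attached. The sketch below simulates that round trip in-process, with no network and no blockchain. The header name `X-Demo-Payment`, the field names, and the unsigned "proof" are all placeholders invented for illustration and are not the actual x402 wire format, which relies on signed payment payloads and settlement verification.

```python
from http import HTTPStatus

PRICE = 1000                  # hypothetical price per request, in micro-USD
PAY_TO = "merchant-wallet"    # hypothetical payee identifier


def serve(headers):
    """Toy resource server: quote a price, then serve once payment is attached."""
    terms = {"amount": PRICE, "pay_to": PAY_TO}
    proof = headers.get("X-Demo-Payment")
    if proof is None:
        return HTTPStatus.PAYMENT_REQUIRED, terms
    if proof.get("amount") != PRICE or proof.get("pay_to") != PAY_TO:
        return HTTPStatus.PAYMENT_REQUIRED, terms
    return HTTPStatus.OK, {"data": "premium result"}


def agent_fetch(budget):
    """Toy agent: pay automatically if the quoted price fits the budget."""
    status, body = serve({})
    if status == HTTPStatus.PAYMENT_REQUIRED:
        if body["amount"] > budget:
            return None  # price exceeds what the agent may spend
        # Stands in for a signed payment; a real client would sign and settle.
        proof = {"amount": body["amount"], "pay_to": body["pay_to"]}
        status, body = serve({"X-Demo-Payment": proof})
    return body if status == HTTPStatus.OK else None
```

Calling `agent_fetch(5000)` returns the paid resource, while `agent_fetch(10)` returns `None` because the quoted price exceeds the agent's budget.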

While Coinbase demonstrates remarkable vertical reach by also running their own settlement-layer blockchain (Base, which is technically an Ethereum Layer 2 network), they still rely on payment service providers to reach merchants. The strategic implication is directional: collaborations flow upward toward the customer. Settlement layers support wallets, wallets integrate with PSPs, PSPs connect to payment standards, and standards reach the end customer.

A blockchain that wants to participate in the agentic commerce economy should integrate broadly at the wallet and PSP layers rather than chasing individual payment standards, because standards are fragmented and constantly emerging, while wallet and PSP partnerships compound across the entire ecosystem.

Just as the TradFi approach carries structural weaknesses, the crypto stack has its own critical gap.

An AI agent with a wallet and no verifiable identity is, to borrow from the 18th-century jurist Edward Thurlow, an entity with "no soul to be damned and no body to be kicked." It can execute trades, trigger liquidity events, or facilitate sanctions evasion, and then vanish into the pseudonymity of the chain.

The crypto community itself recognises this gap. The term "permissionless void" has started circulating after Blockeden coined it to describe an environment where agents transact freely but nobody can verify who they are or who they represent. And there is a deeper paradox still. On-chain constraints can govern an agent's wallet, but they cannot govern an agent's learning, a point Botao Amber Hu's recent research makes compellingly.

Same problem. Opposite direction.

As a recent Tiger Research analysis published on CoinGecko concluded, Big Tech favours closed, controlled systems, while crypto advances open, protocol-based models. The contrast runs deeper than technology, and it extends well beyond Big Tech into TradFi.

What we are looking at is a philosophical divide between two fundamentally different theories of accountability: one reactive and precedent-based, the other prescriptive and encoded by design. The following comparison maps where these two approaches diverge across every critical dimension.

| | TradFi Stack | Crypto-Native Stack |
|---|---|---|
| Philosophy | Common Law: reactive, precedent-based | Napoleonic Code: prescriptive, encoded by design |
| Identity | Bank KYC extended with agent tokens | Wallets + emerging on-chain KYA (e.g. ERC-8004) |
| Delegation | Verifiable Intent logs, spend limits via PSPs | Programmable permissions encoded on-chain |
| Accountability | Human remains principal. Agents are tools. | Protocol-level rules. Unresolved for emergent behaviour. |
| Settlement | Batch processing, settlement windows | On-chain, near-instant, always on |
| Core weakness | Built for humans. Accountability is retrofitted. | Built for trustlessness. Real-world identity is missing. |

What I find genuinely striking when comparing the TradFi stack with the crypto-native stack is how complementary the weaknesses are. What TradFi lacks, namely programmable settlement, encoded delegation, and open standards, crypto has natively. What crypto lacks, namely real-world identity, regulatory integration, and institutional accountability chains, TradFi provides through existing banking relationships.

However, neither is sufficient alone, and that is precisely what makes some form of convergence inevitable.

The Digital Hull

The admiralty courts of the 1840s solved the accountability problem by inventing a legal container for autonomous action. They turned ships into persons. The agentic economy needs a digital equivalent, in the form of an infrastructure where AI agents are simultaneously autonomous and accountable.

As I see it, that infrastructure must combine four key elements, each derived by mapping the weaknesses of the two stacks across the dimensions above:

| Requirement | What it solves for TradFi | What it solves for Crypto |
|---|---|---|
| Programmable settlement: always-on, real-time, compatible with continuous agent activity | Eliminates batch processing and settlement windows | Already native to on-chain infrastructure |
| Verifiable identity linked to real-world accountability: every agent traceable to an accountable person or entity, auditable and enforceable | Already exists through banking relationships and KYC | Fills the "permissionless void," anchors agents to accountable entities |
| Encoded delegation rules: boundaries governing what an agent can do, embedded in the transaction layer rather than in policy documents the agent never reads | Replaces after-the-fact policy review with constraints that travel with the agent | Already native through on-chain programmable logic |
| Open standards: protocol-based rather than platform-controlled | Prevents lock-in to proprietary agent checkout platforms | Prevents fragmentation across competing protocols |

The pattern is clear: for nearly every requirement, one stack already has it natively while the other lacks it. The complete infrastructure needs programmable settlement, verifiable identity, encoded delegation, and open standards. It takes what each side already does well and fills the gap the other cannot.

History suggests how this will unfold. New technologies initially operate within the constraints of old infrastructure, much like early automobiles had to navigate dirt roads built for horses. For a while, the old infrastructure appears adequate. But eventually the new technology's requirements become so fundamentally different that an inversion occurs: the roads get paved for cars, and the horses adapt. What was once the adaptation becomes the foundation.

I believe we are approaching that infrastructure inversion point for payments. TradFi is currently trying to make agentic commerce work on rails designed for humans, and for a while it will work. But as agent-initiated transactions grow in scale, speed, and complexity, the four requirements above will increasingly favour infrastructure that was designed for agents from the ground up.

The question for every builder in payments is the same one the shipowners faced nearly two centuries ago: are you building a system for the current state of affairs, passively watching agents, or are you building for what comes next, making agents structurally accountable? Because those who stay on today's TradFi rails will be subject to tomorrow's centralised tolls. The 4% fee that OpenAI has alluded to is probably just the beginning. And if that sounds absurd, ask anyone who builds on the Apple App Store what 30% feels like.

Epilogue: At least one blockchain has already built the infrastructure that this convergence, and the inversion it enables, requires. Stay tuned.